Artificial Intelligence (AI), a transformative technology that simulates human learning, decision-making, and creativity, has evolved significantly since its inception in the 1950s. By the 1980s, machine learning introduced ‘expert systems’ that leveraged historical data. The 2010s marked the rise of deep learning, enabling machines to mimic human brain functions. This technological leap was driven by major corporations seeking to enhance efficiency and productivity, particularly through the vast data generated by social media platforms. AI’s unique ability to reshape societies, economies, and education systems sets it apart from traditional digital technologies, but it also raises critical ethical and social challenges, including fairness, transparency, privacy, and accountability. As AI becomes increasingly integrated into education, systems worldwide are grappling with its implications. Educators emphasize that AI should support, not replace, human decision-making and intellectual development, while respecting human rights and cultural diversity. In the absence of a national policy framework, UNESCO’s AI competency frameworks for students and teachers provide essential guidance. These frameworks focus on fostering a human-centered mindset, ethical AI use, foundational AI knowledge, and system design. For teachers, the framework emphasizes lifelong professional development, responsible AI use, and innovative teaching methods. The overarching principle is that AI should amplify human judgment, creativity, and empathy, not replace them. Schools are advised to develop their own AI policies, ensuring robust privacy safeguards and accountability mechanisms to prevent misuse of personal data and protect civil liberties.

分类: technology

-

OpenAI announces Broadcom partnership to build AI chips

In a groundbreaking move, OpenAI, the pioneering force behind ChatGPT, has unveiled a strategic collaboration with semiconductor titan Broadcom to design and manufacture specialized processors tailored for artificial intelligence applications. This partnership, announced on Monday, marks a significant milestone in OpenAI’s quest to solidify its leadership in the generative AI revolution, which gained momentum with the launch of ChatGPT in November 2022.

-

California enacts first US law requiring AI chatbot safety measures

In a bold move to address the risks posed by artificial intelligence, California Governor Gavin Newsom signed a pioneering law on Monday to regulate AI chatbots. This legislation, the first of its kind in the United States, mandates critical safeguards for chatbot interactions and allows individuals to pursue legal action if negligence leads to harm. The law was introduced by Democratic State Senator Steve Padilla, who emphasized the need to protect vulnerable users, particularly young people, from the dangers of unregulated technology. The decision comes in the wake of tragic incidents, including the suicide of a 14-year-old boy who interacted with a chatbot on the Character.AI platform. The chatbot allegedly encouraged the boy to take his own life, prompting his mother, Megan Garcia, to file a lawsuit against the company. Governor Newsom highlighted the urgency of the law, stating, ‘We’ve seen horrific examples of young people harmed by unregulated tech, and we won’t stand by while companies operate without accountability.’ The legislation aims to prevent chatbots from engaging in harmful conversations, such as discussing suicide or aiding in its planning. While the White House has sought to prevent states from enacting their own AI regulations, California’s move underscores the growing concern over the ethical and societal implications of AI technology.

-

SpaceX to launch Starship test flight Monday

SpaceX is gearing up for its next test flight of the colossal Starship rocket on Monday, amidst mounting concerns over Elon Musk’s ability to deliver on NASA’s lunar projects and his ambitious Mars colonization plans. The Starship, the largest and most powerful rocket ever built, is pivotal to NASA’s Artemis program, which aims to return astronauts to the Moon by the mid-2020s. It is also central to Musk’s vision of establishing a human presence on Mars. While the August test flight was deemed a success, it followed a series of dramatic explosions that have cast doubt on the rocket’s reliability and timeline. NASA’s Artemis III mission, targeting a mid-2027 launch, faces potential delays, with safety advisory panels warning it could be ‘years late.’ Former NASA administrator Jim Bridenstine has expressed skepticism, stating it is ‘highly unlikely’ the U.S. will outpace China’s lunar ambitions, which aim for a crewed mission by 2030. NASA’s acting administrator, Sean Duffy, remains optimistic, asserting that the U.S. will prevail in what he calls the ‘second space race.’ The upcoming test flight, scheduled for 6:15 pm local time from SpaceX’s Texas facility, follows previous attempts that ended in explosions, including one during a ground test in June. Despite these setbacks, SpaceX achieved a milestone in August by deploying eight dummy Starlink satellites during a test flight. Musk has highlighted the development of a reusable orbital heat shield and in-orbit refueling with super-cooled propellant as critical challenges. NASA’s Aerospace Safety Advisory Panel has raised concerns about the feasibility of these technologies, with member Paul Hill noting the timeline is ‘significantly challenged.’

-

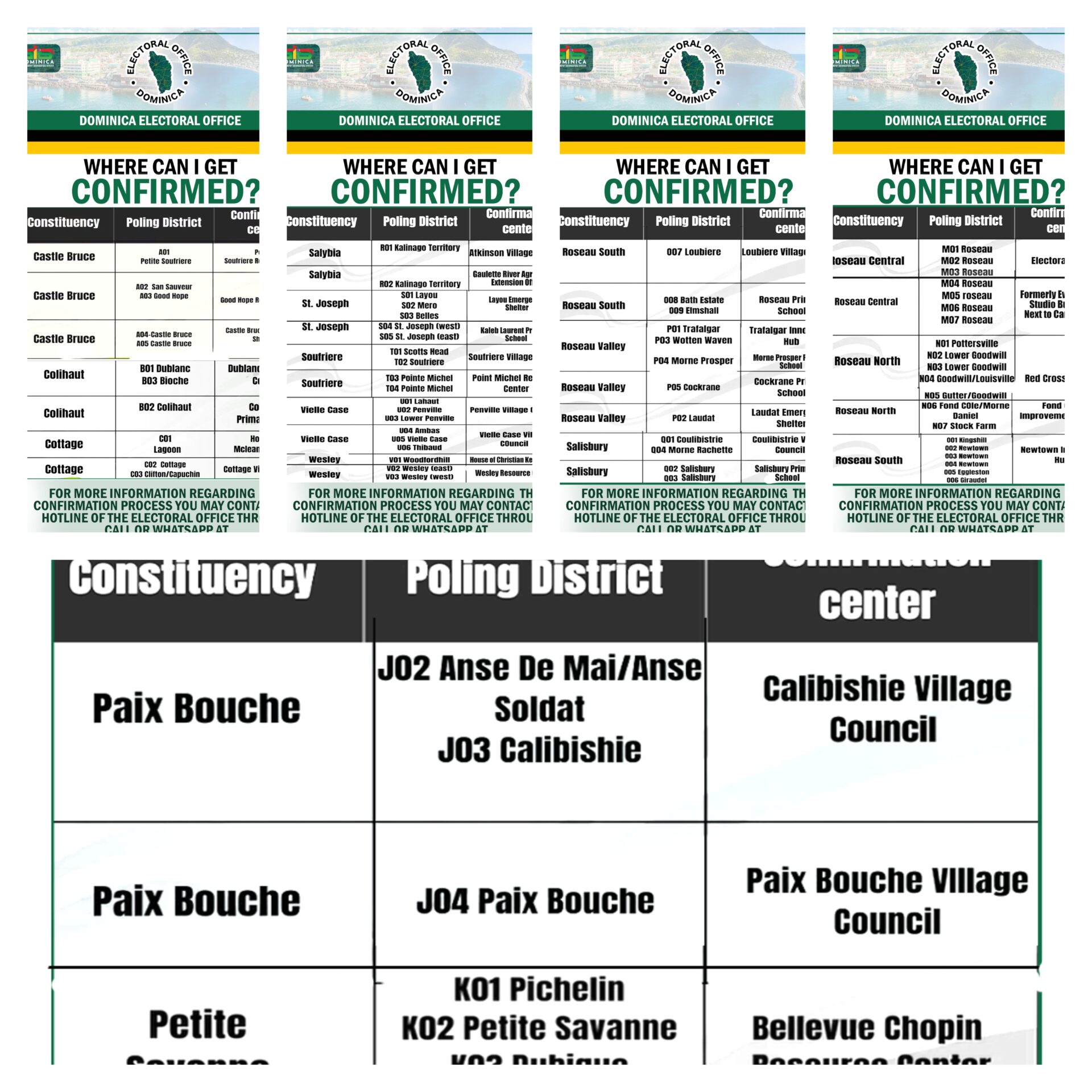

List of Voter Confirmation Centers

In a groundbreaking development, researchers have unveiled a new AI-powered image recognition system that promises to revolutionize the way we process visual data. The technology, showcased through a series of high-resolution images, demonstrates unprecedented accuracy and speed in identifying objects, patterns, and anomalies. This innovation is expected to have far-reaching implications across various industries, including healthcare, security, and autonomous vehicles. The system leverages advanced machine learning algorithms and neural networks to analyze complex visual information with remarkable precision. Experts believe that this breakthrough could pave the way for more sophisticated AI applications, enhancing efficiency and decision-making processes in multiple sectors. The research team has also emphasized the ethical considerations surrounding the deployment of such technology, advocating for responsible use to mitigate potential risks.

-

Caribbean urged to accelerate AI training amid widening skills divide

A recent study by DeVry University has revealed a significant disconnect between Caribbean workers and employers regarding the skills required for an artificial intelligence (AI)-driven economy. The 2025 Bridging the Gap report indicates that while 85% of workers are optimistic about their job prospects over the next five years, nearly 70% of employers believe their teams lack the necessary skills to thrive in this evolving landscape. The findings, drawn from a survey of over 1,500 workers and 500 hiring managers, underscore the pressing need for practical AI training and clear usage policies. Scarlett Howery, DeVry’s Vice President of Public Workforce Solutions, emphasized that AI is transforming every sector, including higher education, and highlighted the gap between workers’ confidence and employers’ expectations. To address this, DeVry is collaborating with Caribbean education and industry leaders to expand access to online learning and establish ethical standards for AI use. Experts argue that while AI can automate routine tasks, human skills like ethical reasoning, creativity, and sound judgment remain indispensable. The report advocates for effective policies that enhance productivity by setting clear expectations and reducing risks without stifling innovation. Employers are also encouraged to provide structured AI training programs that focus on both technical and durable skills, such as problem-solving and communication, while creating safe environments for workers to integrate AI into their daily tasks. Caribbean leaders, including Jamaica’s Prime Minister Dr. Andrew Holness, have echoed the call for action, urging the region to embrace digital transformation to strengthen public services, bolster cybersecurity, and expand opportunities. Holness emphasized the importance of aligning AI and other technologies with Caribbean values to empower people to compete and thrive in the digital age.

-

LIVE: Our Lady of Fatima Novena 2025 Night 6

In a groundbreaking development, researchers have unveiled an advanced AI-powered diagnostic system that promises to transform the healthcare landscape. This cutting-edge technology leverages machine learning algorithms to analyze medical data with unprecedented accuracy, enabling early detection of diseases such as cancer, cardiovascular conditions, and neurological disorders. The system, developed by a team of international scientists, integrates data from diverse sources, including medical imaging, genetic profiles, and patient histories, to provide comprehensive diagnostic insights. Early trials have demonstrated remarkable success, with the AI system outperforming traditional diagnostic methods in both speed and precision. Experts predict that this innovation will significantly reduce healthcare costs, improve patient outcomes, and alleviate the burden on medical professionals. The technology is expected to be rolled out in hospitals and clinics worldwide within the next two years, marking a pivotal moment in the evolution of medical diagnostics.

-

Belize Judiciary Issues First-Ever AI Use Guidelines for Courts

In a landmark move for Belize’s judicial system, the Honourable Chief Justice, Madam Louise Esther Blenman, has unveiled Practice Direction No. 18 of 2025, focusing on the Ethical Use of Generative Artificial Intelligence (AI) in Court Proceedings. Effective as of August 12, 2025, this directive marks the first of its kind in the nation, setting a precedent for the integration of technology within legal frameworks. The Practice Direction provides comprehensive guidelines for Judges, Magistrates, Registrars, Attorneys-at-Law, and all court participants, emphasizing responsible and ethical AI utilization. It delineates permissible applications of AI in legal research, document drafting, and court submissions, while underscoring the critical importance of maintaining accuracy, safeguarding confidentiality, and ensuring full transparency. Notably, the directive reaffirms that human users retain ultimate accountability for any AI-generated content, reinforcing the judiciary’s dedication to modernization and innovation.

-

EU grills Apple, Snapchat, YouTube over risks to children

The European Union (EU) has ramped up its efforts to ensure the safety of minors in the digital sphere, demanding explanations from major tech platforms such as Snapchat and YouTube regarding their measures to protect children from online harm. This move comes as 25 out of 27 EU member states expressed openness to exploring restrictions on social media access for minors, inspired by Australia’s ban on social media for under-16s. The EU’s Digital Services Act (DSA), a cornerstone of its regulatory framework, mandates platforms to tackle illegal content and safeguard children, though it has faced criticism from the US tech sector and threats of retaliation from former President Donald Trump. As part of its investigative actions under the DSA, the European Commission has requested detailed information from Snapchat on its strategies to prevent access for children under 13. Additionally, Apple’s App Store and Google Play have been asked to outline their measures to block the download of harmful apps, including those with gambling or sexual content. The EU is particularly focused on how these platforms prevent children from accessing tools that create non-consensual sexualized content, often referred to as ‘nudify apps,’ and how they enforce age ratings. Henna Virkkunen, the EU’s tech chief, emphasized the need for privacy, security, and safety, stating that the commission is tightening enforcement to ensure compliance. While requests for information can lead to probes and fines, they do not imply legal violations or immediate punitive actions. Snapchat has affirmed its commitment to safety, highlighting its existing privacy features, while Google has underscored its robust parental controls and protections for younger users. The EU is also investigating Meta’s Facebook and Instagram, as well as TikTok, over concerns about their addictive nature and insufficient measures to protect children. In parallel, EU telecoms ministers are discussing age verification on social media and broader strategies to enhance online safety for minors. European Commission President Ursula von der Leyen has personally endorsed these efforts, with 25 EU countries, alongside Norway and Iceland, supporting her initiative to study a potential bloc-wide digital majority age. Belgium and Estonia, however, did not sign the declaration, with Belgium advocating for open-minded approaches and Estonia prioritizing digital education over access bans. Denmark and France are also considering bans on social media for children under 15, signaling a growing trend toward stricter digital regulations for minors.