TORONTO – In a major legal development following one of Canada’s deadliest mass shootings in recent years, seven new federal lawsuits have been lodged against OpenAI in a California court by legal representatives of victim families connected to the February attack in the small British Columbia mining town of Tumbler Ridge.

The litigation centers on the AI developer’s controversial handling of account activity linked to 18-year-old Jesse Van Rootselaar, the perpetrator of the attack that left eight people dead and multiple others seriously injured. After the shooting, OpenAI faced widespread public backlash over its choice not to alert Canadian law enforcement to concerning behavior detected on Van Rootselaar’s ChatGPT account, which the company said it banned in June 2025 – months before the attack. In the immediate aftermath, OpenAI defended its inaction, claiming there was no clear evidence of an imminent violent plot that would trigger a report to authorities.

The new lawsuits challenge multiple core claims made by OpenAI, according to official statements from the plaintiffs’ cross-border legal team. Legal representatives allege that OpenAI deliberately chose not to report Van Rootselaar’s activity, on the reasoning that flagging one high-risk account would create an obligation to flag thousands of similar concerning cases across the platform. Beyond this, the suits cast doubt on OpenAI’s assertion that Van Rootselaar’s original account was ever fully banned.

The legal filing details longstanding gaps in OpenAI’s account safety protocols, claiming that when users are locked out for dangerous conduct, the company actively provides guidance on how to restore access – including workarounds to bypass mandatory 30-day suspension periods. Even for permanently banned users, the suit notes, OpenAI does not block repeat sign-ups: the company explicitly informs users that they can immediately create a new account simply by registering with a different email address. Per court documents, Van Rootselaar did exactly that, launching a new ChatGPT account after her first was restricted.

This new wave of US litigation follows an earlier Canadian case brought on behalf of Maya Gebala, a 12-year-old victim who was gravely wounded in the school shooting. Legal teams confirmed they are coordinating across the US-Canada border, and the new US filings will supersede the existing Canadian action. Legal representatives also signaled that more lawsuits are imminent, saying that over two dozen additional claims on behalf of shooting victims will be filed in batches over the coming weeks.

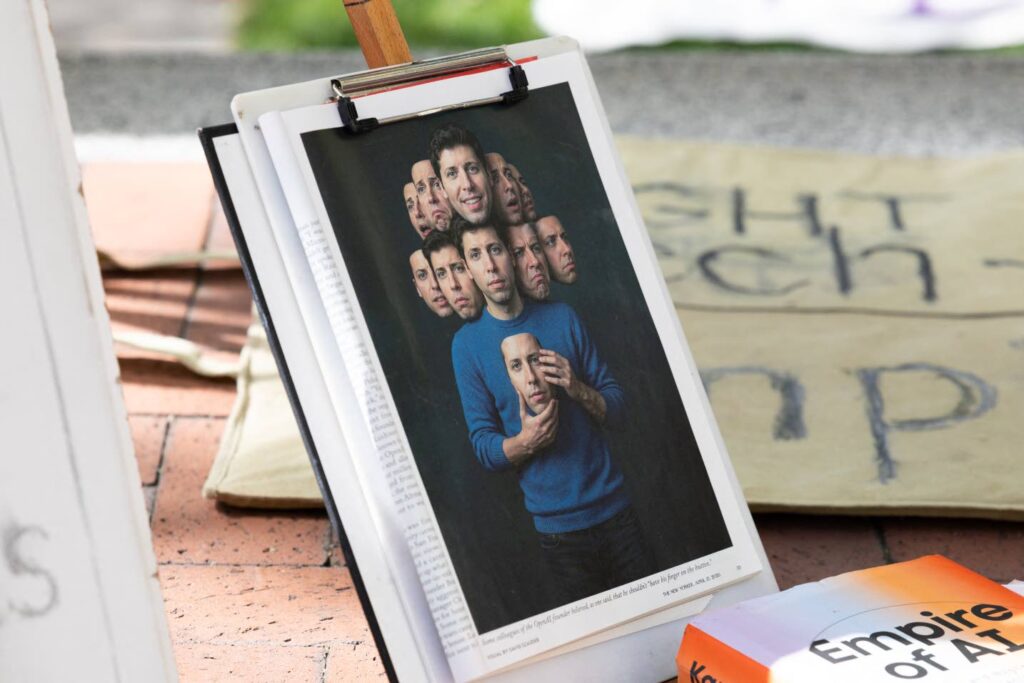

OpenAI has already taken public steps to address fallout from the incident. Earlier this month, CEO Sam Altman issued a direct public apology to the Tumbler Ridge community, saying he was “deeply sorry that we did not alert law enforcement to the account that was banned in June.” The company has also confirmed that it has revised its safety policies since the incident, acknowledging that under its updated protocols, Van Rootselaar’s behavior would now trigger an automatic flag to police.

When contacted for comment on Wednesday’s new filings, an OpenAI spokesperson reiterated the company’s commitment to preventing misuse of its tools. “We have a zero-tolerance policy for using our tools to assist in committing violence. As we shared with Canadian officials, we have already strengthened our safeguards, including improving how ChatGPT responds to signs of distress,” the spokesperson said.

The attack remains one of the most high-profile cases testing the responsibility of AI platforms for dangerous user-generated content. Van Rootselaar first killed her mother and brother at their family home in Tumbler Ridge, before traveling to the town’s local secondary school, where she shot and killed five students and one teacher. She ultimately died from a self-inflicted gunshot wound after responding police entered the school building.